In January, TABPI conducted a survey of b2b (trade publication) editors from across the world. The survey asked questions about how Artificial Intelligence products, such as ChatGPT, are being used in editorial and design work, whether they are effective, and what the future may hold for the industry.

In all, 43 editors responded to the online survey form. Roughly 44% were in an Editor in Chief or lead editorial role. Editorial Director, generally a title given to an editor who supervises multiple publications at a company, represented 19% of the respondents, while 16% described themselves as being in a Managing Editor role. The remaining people were focused on other editorial or design roles.

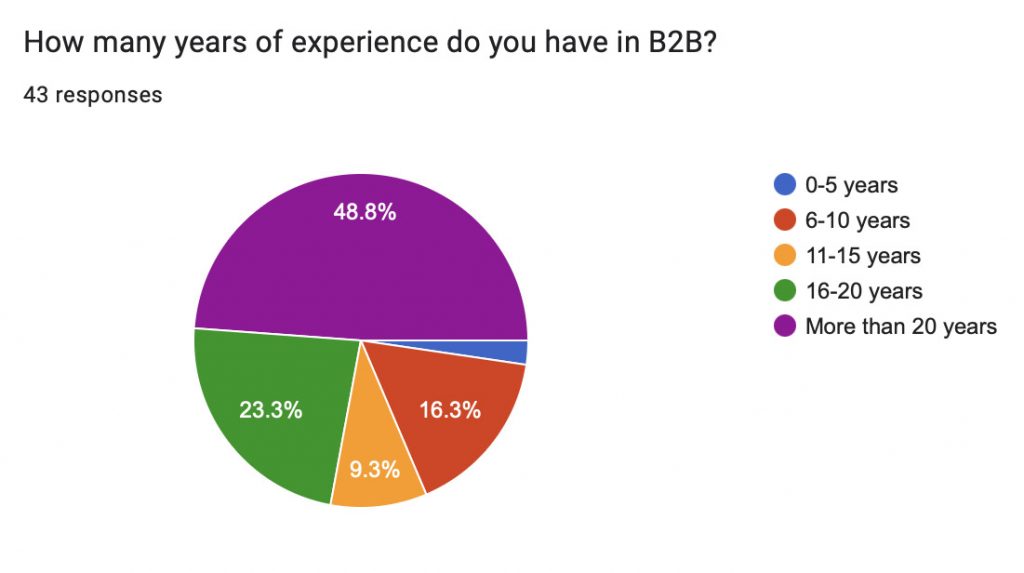

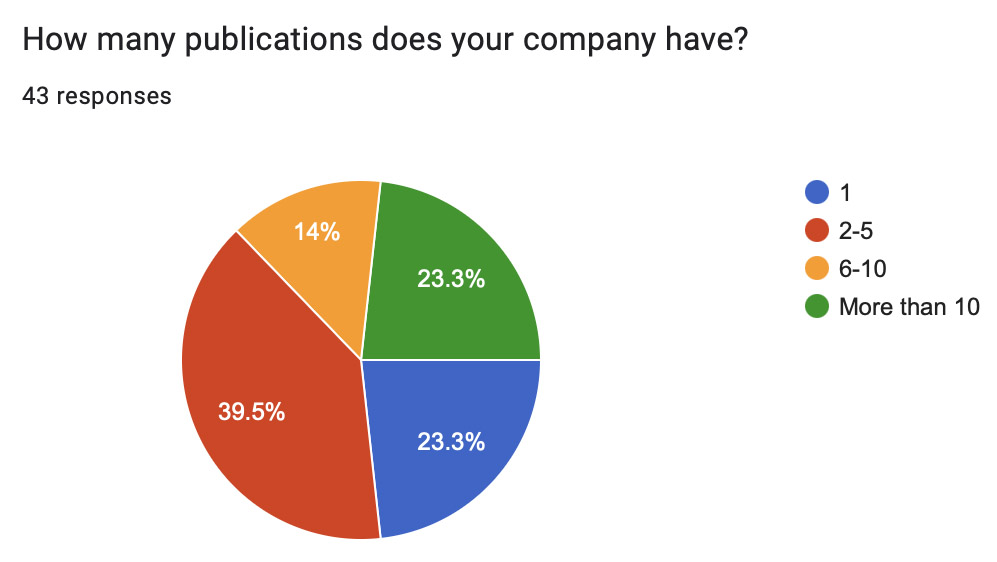

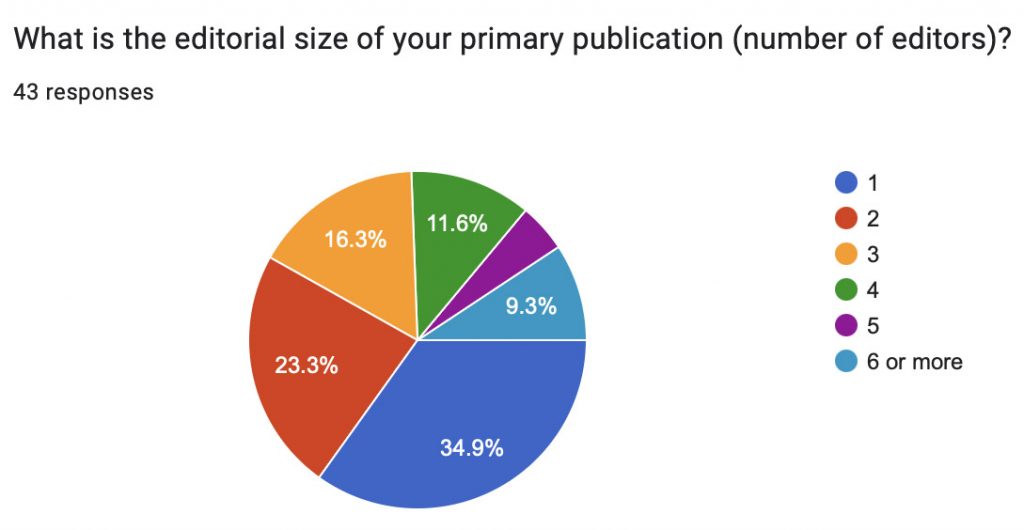

Roughly half of the respondents were people with more than 20 years in the industry, with only about 20% working in the b2b publishing world for 10 years or fewer. The companies represented in the survey were most likely to have 2-5 titles (40%), more than 10 titles (23%), or only a single title (23%). Most publications (58%) represented had only one or two editorial staff members, while 14% had five or more editors on staff.

AI is certainly making inroads into the b2b publishing world, as is the case for so many industries. Currently, 61% of respondents said that it was being used in either editorial or design processes, even though ChatGPT itself is roughly 14 months old and DALL·E 3, the image-creating AI, is a mere 5 months old.

Using AI

One platform being used wasn’t at all surprising: ChatGPT was mentioned the most as a tool for editorial staffs. Grammarly, Bard, and Photoshop also had multiple mentions. Photoshop’s AI tool for expanding photo images is being used for a variety of tasks, such as fitting pre-set photo sizes for newsletters. AI is also being used in many applications that editors rely on. Otter.ai, a California-based company that has developed a speech-to-text transcription app, is growing in popularity, pushing past human-centered transcription services like Rev.com. (Rev.com has now introduced its own AI transcription service.)

Other tools being used include Midjourney, Miso.ai, Claude, Adobe Firefly, Descript, Pictory, and the DALL·E image-creating software.

So far, respondents say that the use of these platforms is not changing how they’re using other tools, and much of the work they are doing at this point is trial-and-error.

“We’re mostly experimenting and seeing too much made-up and mediocre results to rely on genAI. The verification and correction is extremely high. We do see some limited utility in using summarization capabilities such as for social posts,” said one editor.

“We have implemented Miso.ai on some sites for readers to ask questions from our content. It is OK for factual questions but can hallucinate on judgments and narratives, so we limit its scope,” explained another.

Many of the current tools were described as spotty or unreliable. Editorial staffs are using them, but only with caution and while double-checking the results.

This AI revolution is still quite new, but many b2b publications have been willing to be early testers, if not early adopters. While 37% said they don’t use AI in editorial or design processes, 39% have used it for three to six months and an additional 20% have done so for more than six months. Another 5% have been trying AI out for less than three months.

Editors were asked in which areas of work AI is currently being used, and the results showed a range of tasks that can make processes more efficient. More than 35% said that they were using AI for brainstorming article ideas for their publications, and 32% reported using it for content generation. Next up was image creation or manipulation, with 29% reporting use.

More than 20% of respondents said they relied on AI for content optimization, drafting potential interview questions, developing first drafts of stories, and help with SEO. And 18% said that they also used AI for creating survey questions and for analyzing their content and drawing insights from it.

B2B journalism is nothing if not creative, and the survey found that editorial staffs are trying out AI applications for many additional tasks, such as: language translation, internal scheduling, headline generation and brainstorming, drafting social media posts, summarizing news releases, content curation and generating videos to summarize already written pieces.

Impact and challenges

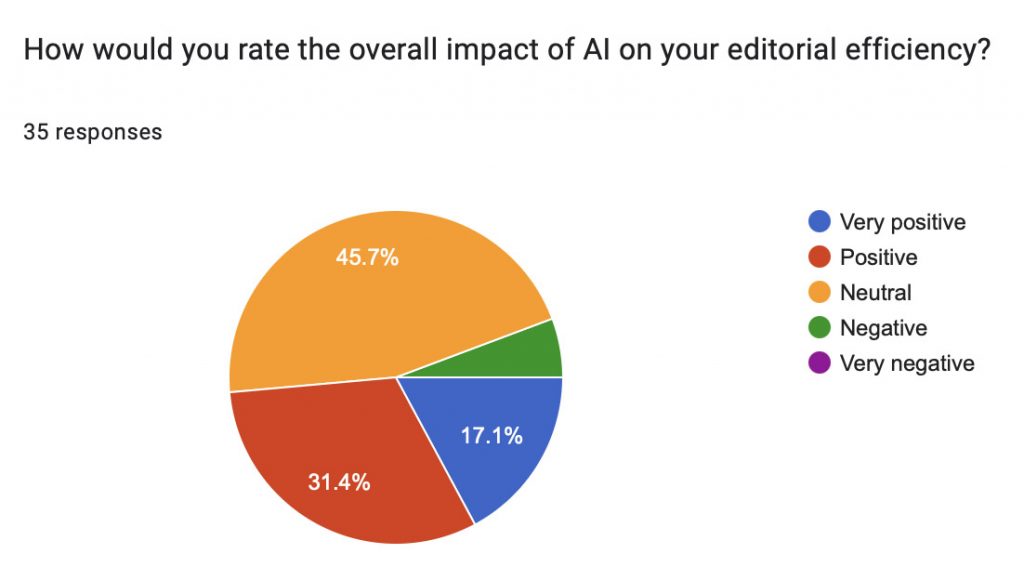

The jury’s still out on AI’s usefulness for our line of work, with fully 46% of respondents saying they’re neutral on the overall impact of the technology on editorial efficiency. Only 6% reported it as being negative, while 31% described it as positive and 17% said it has been very positive.

Many of the editors who responded explained that their confidence in AI-generated content was not high, except when it was used for early drafts of ideas, generating brainstorming-type lists, or overcoming writer’s block.

“The existing tools in other areas do not give us confidence that we would get better results than we get with a trained editor and lead us to suspect that our quality would potentially decline,” said one.

Authenticity and accuracy were areas of concern, as well — along with the ethics of using AI. Some people did cite the still-evolving issues of copyright and plagiarism and how society (and legal experts) have still not come to firm conclusions about whether AI is violating current laws.

“We are concerned about the theft of intellectual property that some of these tools seem to have relied on to build their databases. On a practical level, we are also concerned about the issue of crediting AI versus human contributions, as it is unlikely that any given work product would be the result of all one or all the other,” said one editor. “There are also well-documented examples of tools like Chat GPT embedding the prejudices of the broader society, given the undifferentiated sourcing of the training set.”

But others were not as fazed.

“I think the biggest ethical imperative is keeping up with generative AI tools knowing they are likely to cause significant disruption in the coming years to the field. Some editors seem to view AI with trepidation, but it can be limiting to view AI through a black-and-white lens, and more helpful to approach it with curiosity rather than assuming that it is mainly good or mainly bad. The internet is a good analogy — it has led to lots of problems, hacking, misinformation, etc., but also positively transformed knowledge accessibility,” said another respondent.

One ethics-related issue that came up numerous times in the survey was transparency. Many respondents mentioned that disclosing to the audience how AI is being used at a publication was critical.

“Our company has a clear, defined AI policy, and when complied with, ethics are not compromised,” explained one editor.

Future use?

Given how new AI is in the editorial/design space, it wasn’t surprising to see that only 27% of the survey respondents said that they have received any corporate training on AI tools or technologies. It seems that most staffs are playing with the software and tools themselves — and feel they need to investigate the technology instead of waiting for management to decide it’s a necessary productivity tool.

Only 14% of respondents said that their organization was planning to invest more in AI for editorial purposes in the coming year. An additional 26% said the organization was not, and more than half (60%) were unsure of what was being planned at their company.

Stay tuned for Part 2 of this report, which will focus on insights into privacy and performance and recommendations for better use of AI.

[Full transparency: TABPI used ChatGPT to develop a first draft of the survey questions, which were extensively modified by a human editor before being finalized. It was also used to create the lead photo in this article.]

________________________________________________________

The 2024 Tabbies are now open for submissions!

Enter here through March 14th.
